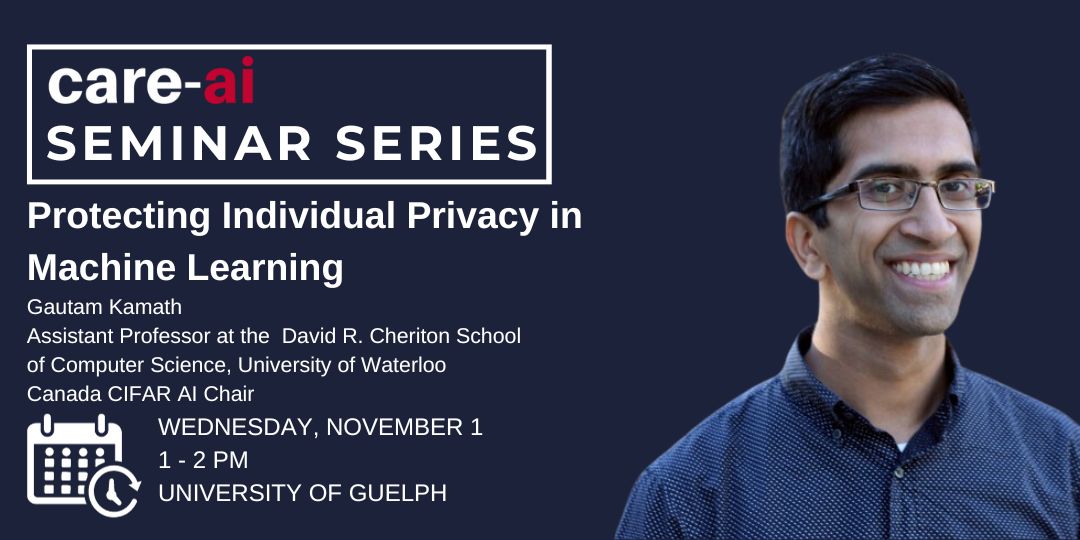

Wednesday, November 1, 2023 @ 1:00 pm - 2:00 pm

Abstract: Modern machine learning systems are trained on massive amounts of data. It turns out that, without special care, machine learning models are prone to regurgitating or otherwise revealing information about individual data points. This is problematic when parts of the training data are sensitive or contain private information, as is commonly the case in many settings of interest. Dr. Kamath will discuss differential privacy, a rigorous notion of data privacy, and how it can be used to provably protect against such inadvertent data disclosures by machine learning models. He will focus particularly on recent approaches that simultaneously employ both non-sensitive and sensitive data, granting significant improvements in the utility of privately trained models. He will also discuss pitfalls with these methods and potential paths forward for the field.

Bio: Gautam Kamath is an Assistant Professor at the David R. Cheriton School of Computer Science at the University of Waterloo, and a Canada CIFAR AI Chair and Faculty Member at the Vector Institute. He has a B.S. in Computer Science and Electrical and Computer Engineering from Cornell University, and an M.S. and Ph.D. in Computer Science from the Massachusetts Institute of Technology. He is interested in reliable and trustworthy statistics and machine learning, including considerations such as data privacy and robustness. He was a Microsoft Research Fellow as part of the Simons-Berkeley Research Fellowship Program at the Simons Institute for the Theory of Computing. He serves as an Editor in Chief of Transactions on Machine Learning Research. He is the recipient of the Faculty of Math Golden Jubilee Research Excellence Award and an NSERC Discovery Accelerator Supplement, and was awarded the Best Student Presentation Award at the ACM Symposium on Theory of Computing.